Ethics Case Controversy

There are few people in the world who are unaware of Facebook. With over two and a half billion monthly users (about one third of the planet’s population), Facebook is the most popular social media site on the Internet. However, as many people know, the company’s success has also come with a seemingly endless stream of controversies dating back to its inception in 2006. This includes a multitude of user data leaks, government investigations, unethical practices, and so much more. However, Facebook (who recently changed their name to Meta) is currently under fire following the release of the Facebook Papers: internal documents detailing a number of wrongdoings being

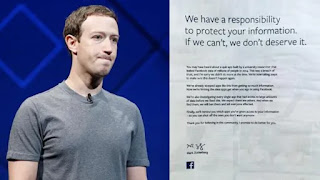

Zuckerberg testifying to Congress about

the 2018 Cambridge Analytica Scandal

committed by Facebook. Although this alone is enough to spark outrage, one of the most recent developments has been a focal point of the controversy. This being Facebook’s order to employees to lock down any internal documents from the company from now dating back to 2016 (Mac & Issac 2021).

Although the focus of this analysis is the present, the events leading up to it were set in motion long ago. Over the past five years, Facebook has been at the center of a seemingly countless number of data leak scandals involving both the company and its users. Information such as email addresses, ‘private’ communications, and even intimate personal details has been leaked (Yadav 2019). On top of this, many organizations have spoken out against the social media giant, claiming that its website serves as a host for harmful, hateful, divisive, and misleading information. Perhaps the most notable of these was The Guardian’s publication of ‘The Facebook Files’ in 2017. Negative claims about the company’s moderation process and its role as a social media site prompted an investigation by The Guardian, which gathered testimony from Facebook employees and other vital sources showing how flawed the company’s practices were. One upsetting example involved the lack of training Facebook moderators received before working to keep harmful content such as cyberterrorism and child pornography off the site. “Workers exposed to such content should have extensive resiliency training and access to counsellors, akin to the support that first-responders receive. However, testimony from those working to keep beheadings, bestiality and child sexual abuse images off Facebook indicates that the support provided isn’t enough” (Solon 2017). Not only was Facebook a host for vile content, but the efforts it was taking to combat such content were also harming its own employees.

Another scandal that ravaged Facebook was the Cambridge Analytica breach. Made public in March 2018, it involved the acquisition of the data of over 50 million Facebook users by the political consultancy Cambridge Analytica, which then used this data in an attempt to influence voters in the 2016 U.S. presidential election. “‘We exploited Facebook to harvest millions of people’s profiles,’ whistleblower Christopher Wylie told The Observer. ‘And built models to exploit what we knew about them and target their inner demons. That was the basis the entire company was built on’” (Chen & Potenza 2018). Evidently, Facebook was continuing its shady practices and repeatedly falling short in protecting its employees and the privacy of its users. As the years went on, scandals such as these only became more frequent.

Most recently and perhaps most significantly, Facebook is under government investigation after former Facebook product manager Frances Haugen blew the whistle on her former employer’s knowledge of the terrible impact it was having on people. Over the past few months, Haugen has exposed information about Facebook’s special treatment of influencers and celebrities, algorithm alterations that increased the divisive nature of the platform, and the overwhelmingly negative impact of Instagram (owned by Facebook) on the mental health of teenage girls (Philipose 2021). What the Facebook Papers showed was that the company was actively working to boost its public image and profits with absolutely no regard for its users’ and the public’s wellbeing. This information was not acquired externally; in fact, it was gathered in audits and research done by the company itself (Horwitz 2021).

What does this have to do with Facebook ordering its employees to lock down their internal information now? Quite a lot, as a matter of fact. Facebook is currently focused on damage control. Every day it becomes more difficult for the company to claim that it is completely free of guilt in this case, which is why it is warning employees to keep information locked down. The most damaging claims against Facebook have time and time again come from people on the inside, whether Christopher Wylie in the Cambridge Analytica scandal or Frances Haugen in the currently unfolding situation. Facebook understands the role its employees can play in exposing practices that are harmful to its public image or potentially illegal. “‘These are the actions of a company attempting to resist scrutiny, not embrace transparency,’ Senator Richard Blumenthal, a Democrat of Connecticut who has led a Senate subcommittee inquiry into Facebook, wrote in a letter to Mark Zuckerberg, Facebook’s chief executive about the action” (Mac & Isaac 2021). The action Facebook is taking now clearly comes from a place of self-preservation. The company is not looking to apologize or make things right; it is simply looking to walk away from this situation the same way it has from previous scandals: with a fine that is minuscule compared to the size of the company and a slap on the wrist.

While Facebook has not defined any punishment for violating this order, which is formally referred to as a legal hold, it is easy to infer that doing so would go badly for the employee. Facebook’s actions have clearly put employees in an extremely vulnerable position. On the one hand, they face losing their jobs for exposing wrongdoing that may or may not be legal but is certainly harming people. On the other hand, they face being held liable for concealing information that is vital to an ongoing investigation, an offense that could land them in serious legal trouble. Whether or not Facebook is able to avoid regulation, it certainly won’t mean the end of the company. The same can’t be said of its employees’ careers. Although Facebook has weathered many controversies in the past, employees can only hope that this one will be a turning point that allows them to feel safe doing their jobs.

Timeline of Events Pertaining to this Controversy

Stakeholders

The stakeholders involved in this particular Facebook controversy are Facebook as a company, Facebook’s employees, Facebook’s users, the whistleblowers, and the government. The way this controversy is handled will set the stage for Facebook’s future as the dominant social media website.

For Facebook as a company, executives such as Mark Zuckerberg would clearly be implicated. If more damning information is leaked or Congress and other government bodies uncover malpractice, the company could be devastated. Harsh regulations could be imposed on the way Facebook is allowed to operate. In fact, depending on the extent of the wrongdoing, the company could be disbanded entirely. Either way, the company’s public image is in jeopardy.

Next are Facebook’s employees, who are in the most difficult position. If they follow Facebook’s orders, they may be participating in a legal hold that keeps them in good standing with the company (thus protecting their jobs), or they may be obstructing an investigation by hiding illegal activity. Additionally, they risk losing their jobs if they disclose wrongdoing, whether it is illegal or merely unethical.

Facebook’s users are also clearly at risk in this case. For one thing, they may fall victim to the platform’s negative effects, such as manipulation through misinformation, damage to mental health, or manufactured psychological addiction. More simply, they may lose access to a social media site they enjoy using. With nearly three billion users, this is quite a large group of stakeholders.

Then there are the whistleblowers, such as Wylie and Haugen. These people stand to have their careers ruined if their efforts fail to make an impact; they will simply be seen as disloyal employees. However, if their testimony helps bring regulation to Facebook, they will have made a huge impact on the future of the Internet.

Finally, there is the government, which includes Congress and any authorities looking to regulate Facebook. In this case, they are responsible for adequately investigating a company with a very checkered history. If they fumble the investigation and let Facebook off easy, they could lose the trust and respect of the people they are sworn to serve. More importantly, they risk letting one of the most globally influential companies in history go unchecked, which could have disastrous effects.

Individualism

The lens of individualism has been used for many years, and both Milton Friedman and Tibor Machan have championed the theory. Under individualism, the goal of an organization is to maximize its profit for its stockholders by any means within the law. Facebook, Inc. has had some very impressive earnings over the past few years, but not to the benefit of its stakeholders. Rather, it has put people at risk and made it harder for the company itself to keep profiting along the way.

Friedman would not completely agree with what Facebook has done, but he could agree with some of it. For Friedman, the only goal of business is profit, so he would approve of Facebook’s drive to maximize it. However, the businessperson’s sole obligation is to maximize profit for the owners or stockholders within the law. On the other hand, Machan would most likely view the situation slightly differently because of his libertarian views on individualism. His view states:

“…whether they are individual people or the stockholders of a publicly-traded company. The business has no direct responsibility to any other interest-groups, which are often called stakeholder groups in the context of business. Employees, customers, suppliers, and the societies that the business impacts are all examples of stakeholders when they are affected by the business’s decisions”.

Even though it has been exposed that Facebook values profit over people, one must ask at what expense it holds these values. If its pursuit of profit carries it outside the law, it begins to go against individualism itself. Even with all these views of individualism in mind, it is hard to argue in Facebook’s favor. The company did not act in the interest of its stakeholders, and if it is found guilty of some of the things it has been accused of, it technically did not stay within the law either.

Individualism offers a fair and balanced view in this case because it considers both the organization’s profit and, taking a slight step back into ethics, whether the organization is breaking any laws. Where the theory falls short is the gap between what is illegal and what is merely unethical.

One example of Facebook’s unethical conduct through the individualist lens is its long history of data privacy breaches. It would be one thing if the company were actively trying to keep data secure and prevent these breaches; however, it is not. Leaked internal documents showed that Facebook was running a “secretive global lobbying operation targeting hundreds of legislators and regulators” in order to get rid of data privacy laws. Doing so would benefit the company enormously, at its customers’ expense, by letting it sell their personal information. Individualism considers this unethical because Facebook was focused entirely on its profits, without a care for how getting rid of data privacy laws would affect its customers. This shows that even through a lens that is pro-profit and pro-company, Facebook was undeniably in the wrong.

Utilitarianism

From a utilitarian perspective, Facebook has drastically mishandled this controversy as well as the events that led up to it. Utilitarianism is an ethical theory that focuses on maximizing happiness for all beings capable of feeling it. This applies to both the short-term and long-term effects of an action. With many of its actions surrounding this case, Facebook has done just the opposite of maximizing happiness for practically all stakeholders involved.

Facebook (Company): Ultimately, it is Facebook and executives such as Zuckerberg who have dug the hole they are currently stuck in. Instead of doing their absolute best to maximize the success of their company through ethical practices, they disregarded the public good to maximize profits and, in doing so, focused only on their own happiness. In fact, they actively made their users miserable because it kept people on the site longer, which in turn made the company more money. They may argue that the site makes people happy by allowing them to connect with others, but in the long run its effects are proving to be overwhelmingly negative, as the user section discusses. Now Facebook is feeling the consequences of its selfishness and greed. Had it focused on creating a user experience that was safe and healthy for everyone, it would have a more secure and more widely accepted business free from endless controversy. More immediately, had it been more transparent and open with investigators, it could receive help in making its platform a place that maximizes happiness for everyone. Instead, it has shut everyone out and is concealing vital information that might help achieve that goal (Dwoskin et al. 2021).

Facebook Employees: Happiness is sure to be at an all-time low for Facebook employees. For all they know, their jobs and their careers as a whole are at stake for the sake of Facebook’s profits. Facebook’s order to preserve information has had a profoundly negative impact on employees' happiness. Not only do they have to worry about losing their jobs, they must also worry about possibly facing legal action if they conceal the wrong information. This is bound to be incredibly stressful, and either choice carries bad outcomes for these employees. A utilitarian would be appalled by the way Facebook is treating its employees.

Facebook Users: The contrast between long-term and short-term effects is the primary reason utilitarianism condemns the way Facebook manipulates its users. In some cases Facebook gives users very short-term happiness by triggering the release of dopamine with a notification, a like, or an appealing post. In the long run, however, this can lead to a social media addiction that may even produce withdrawal symptoms without constant use. Additionally, much of Facebook’s strategy revolves around increasing divisiveness and anger on its platform, which the company determined keeps people coming back and keeps them on the site longer (Ghosh & Scott 2018). Overall, Facebook currently treats sad, angry, or unhappy users as more valuable than happy ones, and its platform will therefore stay designed to keep users that way.

Whistleblowers: The whistleblowers in this case may not all be acting from a utilitarian standpoint, but their actions are certainly utilitarian. These people saw a company actively committing wrongdoing that caused suffering for potentially billions of people. Instead of sitting by and watching, they stood up and did something about it, putting their own careers at risk. In doing so, they hoped to make a difference that would maximize happiness for the greatest number of people affected by this case. Even if it is bad for Facebook, their action may help billions of people become happier.

Facebook whistleblower Frances Haugen discussing her experiences under her former employer

The Government: The government is the only other saving grace for utilitarians in this case, since it is the only other force that appears to be attempting to maximize happiness for people. After the whistleblowers stood up, the government saw that people were suffering and started an investigation that will hopefully put a stop to it. Although the government does have a shady history with large corporations such as Facebook, it truly seems that a concerted effort is being made to impose regulation that will make social media a better place for everyone. This would ultimately be incredibly beneficial to the maximization of happiness for countless individuals.

Undeniably, Facebook is wrong to continue its current practices from a utilitarian point of view. Over its history of more than fifteen years, it only seems to have gotten worse. Year after year, more people seem to be negatively affected by the company than positively, and that is simply a shame. All those involved can only hope that action is taken to help Facebook become a force that maximizes happiness for everyone instead of destroying it.

Kantianism

Kantianism calls for acting rationally, allowing and helping people to make rational decisions, respecting people, their autonomy, and their individual needs and differences, and being motivated by Good Will. Acting rationally means acting consistently and never assuming that rules apply to everyone else but not to you. In Kantianism you also have to consider the Formula of Humanity, which says: “Act in such a way that you treat humanity, whether in your own person or in the person of another, always at the same time as an end and never simply as a means.” In other words, every person is valuable and able to make their own rational decisions. There should also be no lying, cheating, stealing, or deceiving, because these disrespect people and treat them as mere means. For example, if you are selling something and knowingly withhold information that would make the customer rethink the purchase, just so they will buy your product, you are acting against what Kant teaches.

Kant would deem impermissible Facebook’s way of forcing its employees to keep quiet in order to save itself. This is because the company is deceiving itself and the people who use its website by trying to make them think nothing bad is going on in this whole case. It is also lying to the people who use its platform by saying that it is trying to fix the problem when, in reality, it is doing nothing but putting on an act by placing a legal hold on documents dating back to 2016. To begin with, the Facebook Papers that started this whole case repeatedly show how Facebook was lying to, deceiving, and disrespecting its users. Mark Zuckerberg and other Facebook employees continue to lie about what is going on within their own platform. The authors of the article “Only Facebook Knows the Extent of Its Misinformation Problem. And It's Not Sharing, Even with the White House” note that Facebook has recently restricted researchers’ access to data that it once offered with open arms. This came not long after significant connections were discovered between Facebook and health and election misinformation. As the White House and other parties pressure Facebook to explain why data access is being revoked or heavily restricted, the company simply puts forth misleading or untruthful reports about its own investigations into misinformation, or claims that all the data researchers need is already available to them. A common strategy of Facebook throughout its many controversies has been to throw large amounts of seemingly meaningless data at the authorities. The data has very little context and is practically useless, but it gives the company a way to call itself transparent while maintaining its cover-up (Dwoskin et al. 2021). Facebook is lying to its users by distributing content that leaves them misinformed.

Not only that, it is not doing anything to stop fake news, which amounts to letting its users be lied to. By hiding the research it has conducted on itself from the public and from investors, it fails to respect them as people who would want to know what is going on in the company, especially investors who need to know whether they should pull their money out. Facebook is withholding information in order to get more and more people to use its app without telling them about practices that many people would recognize as wrongdoing.

Virtue Theory

Unlike other ethical theories, virtue theory does not provide a set of rules to follow; it is practiced by striving to be a good person, from which good actions follow naturally. The theory asks whether a person can distinguish right from wrong. However, knowing what is right is simply not enough; one must act and practice in order to be virtuous. In the case of Facebook, we may use four virtues to analyze the morality of the company: Courage, Temperance, Justice, and Honesty.

Courage

In short, courage in business ethics indicates a company’s willingness to take risks: the ability to face challenges and admit reality for the sake of what is right. Facebook, as noted, has recognized its impact on society, but it has never acted courageously on that recognition. Many studies and professionals have concluded that the platform has disturbing effects on users, and these effects are even more visible when it comes to politics. Facebook itself admits that Instagram, like photo- and video-sharing social media in general, adversely affects teenage girls (Philipose 2021). However, preventing those issues would sharply decrease the company’s profits, simply because the platform would have less attractive content and fewer viewers (Silberling 2021). In other words, Facebook is currently choosing money over the safety of its users. Therefore, the company fails to achieve courage because it does not apply moral values in its actions.

Temperance

Temperance is also an important virtue for evaluating the case. The prospect of great profit tempts Facebook to ignore and devalue the safety of its users. The company lacks the self-control to enforce more restrictive policies and rules that would protect users from skewed information. The company must recognize and respond to its ethical violations, even though such corrective actions would decrease its profit.

Justice

Justice in business ethics means treating individuals equally and making an effort to produce good-quality products. Facebook does not treat its users fairly; instead, it treats them as its source of revenue. The service it provides does not live up to the quality the company insists on; rather, it is creating harm among users and other stakeholders (The Social Dilemma). The company has failed to act justly, negligently overlooking its own malpractice. Facebook continues hurting users for the sake of money.

Honesty

Of the four virtues, honesty is the only one Facebook has displayed in this case. Honesty is the trait of being truthful about one’s actions. The company has publicly commented on the negative impact of both the spread of misinformation and skewed body images on its young users (The Social Dilemma). Acknowledging such issues is crucial in virtue theory, and in this respect the company has succeeded in displaying the virtue.

Finding the Mean

Facebook overlooks the value of those four characteristics and fails to be virtuous. The company should also find the mean within each of the four characteristics, since balancing them is essential to becoming virtuous.

Facebook has failed to practice most of the four characteristics. The company’s wrongdoings have been putting society at risk of extreme polarization and psychological harm from misleading information. Facebook acknowledges the uncertainty and fear-mongering manipulation on its platform, yet it has implemented no further rules to prevent serious problems. Recognizing what is right is not enough; Facebook can only become virtuous when it takes pivotal actions.

Action Plan

Facebook and its controversies have created a very toxic workplace for its employees and an incredibly harmful platform for its users. What began as simply a way for people to connect with others over the Internet has turned into nothing more than an algorithm that intentionally manipulates its users to keep them on the app for as long as possible and sell their attention to advertisers. There is a way to fix this, but it will require Facebook to completely reevaluate the way it conducts business.

Currently, Facebook is keeping much of its data, and the manner in which it processes that data, under lock and key. Previously, it was involved in numerous efforts to share the massive amounts of data it collects from its billions of users with organizations looking to study it for a number of purposes, such as predicting criminal activity, identifying mental health issues, and simply studying the way people interact with each other on the platform. In response to controversies, Facebook has stopped sharing its data and keeps it entirely internal for fear that it could incriminate the company in some illegal activity. This is counterproductive. Through these efforts, Facebook appears to be trying to keep its platform running the way it does with no regard for how it affects people. What the company does not seem to grasp is that people are going to uncover any harmful practices it engages in while running its business. Take The Social Dilemma (2020) as an example: a number of people were able to work together to make a feature-length documentary about what Facebook is doing, all while the company continues to “preserve” its data. The only way the company can move forward is to once again make its data available to outside organizations so that they can help it find non-harmful ways to run its business. Additionally, it must stop deploying harmful algorithms that promote addictive and mentally harmful behavior among its users. This would help not only the users but also the employees negatively affected by the measures they have been ordered to take to cover up bad practices.

Zuckerberg's apology letter to users after one of many Facebook data leaks

One way to guide Facebook through this change, and potentially to show users that it has turned over a new leaf, is to alter its current mission statement. Facebook’s mission statement has changed slightly over the years, but it has always essentially been “to bring the world closer together.” A more thoughtful and helpful statement for the near future would be “to bring the world closer together and promote the exchange of ideas in a positive and user-friendly way.” This improves on the old statement because it promises a certain degree of ethical behavior on the part of Facebook and reminds the company of its new goal: making its unprecedented influence a positive one.

Facebook’s lack of certain core values has given it a less-than-ideal reputation. Values that would make it more reputable include protecting user mental health, stopping the spread of misinformation, innovation, creativity, and a healthy exchange of ideas. These values would guide the company in improving itself and would support its mission statement, showing that it is not all talk. Above all, stopping the spread of misinformation and prioritizing user mental health would directly address some of its strongest criticisms.

Monitoring these commitments goes hand in hand with the initial plan. If Facebook shared its data with external organizations, it would be easy to monitor any deviations from its new commitments. For good measure, however, Facebook could allow these organizations to conduct internal examinations, similar to how accountants perform audits. This would uncover any wrongdoing or any data that appears to be misused. Finally, external organizations could conduct employee surveys that guarantee job security and anonymity to employees who report wrongdoing.

People may not use Facebook’s websites as much as they do now, but they would most likely still use them every day. The popularity of Facebook’s websites is undoubtedly increasing every day with no end in sight. It is undeniable that advertisers would still find the online real estate the company offers invaluable despite the scaling back of harmful practices. Help from outside organizations would allow Facebook to develop a clear roadmap for gradually scaling back practices that are harmful to users without significantly harming its own profits.

References

Mac, Ryan, and Mike Isaac. “Facebook Tells Employees to Preserve All Communications for Legal Reasons.” The New York Times. The New York Times, October 27, 2021. https://www.nytimes.com/2021/10/27/technology/facebook-legal-communications.html.

“15 Years of Facebook, 15 Years of Controversy: A Timeline of Events- Technology News, Firstpost.” Tech2, February 5, 2019. https://www.firstpost.com/tech/news-analysis/15-years-of-facebook-15-years-of-controversy-a-timeline-of-events-6028641.html.

Dwoskin, Elizabeth, Cat Zakrzewski, and Tyler Pager. “Only Facebook Knows the Extent of Its Misinformation Problem. And It's Not Sharing, Even with the White House.” The Washington Post. WP Company, August 19, 2021. https://www.washingtonpost.com/technology/2021/08/19/facebook-data-sharing-struggle/.

Ghosh, Dipayan, and Ben Scott. “New Facebook Scandal Shows How Political Ads Manipulate You.” Time. Time, March 19, 2018. https://time.com/5197255/facebook-cambridge-analytica-donald-trump-ads-data/.

Chen, Angela, and Alessandra Potenza. “Cambridge Analytica's Facebook Data Abuse Shouldn't Get Credit for Trump.” The Verge. The Verge, March 20, 2018. https://www.theverge.com/2018/3/20/17138854/cambridge-analytica-facebook-data-trump-campaign-psychographic-microtargeting.

“The Facebook Files.” The Wall Street Journal. Dow Jones & Company, October 1, 2021. https://www.wsj.com/articles/the-facebook-files-11631713039.

Philipose, Rahel. “Explained: Who Is Facebook Whistleblower Frances Haugen, and What Has She Revealed?” The Indian Express, October 13, 2021. https://indianexpress.com/article/explained/who-is-facebook-whistleblower-frances-haugen-7556326/.

Solon, Olivia. “Underpaid and Overburdened: The Life of a Facebook Moderator.” The Guardian. Guardian News and Media, May 25, 2017. https://www.theguardian.com/news/2017/may/25/facebook-moderator-underpaid-overburdened-extreme-content.

Williams, Erika. Courthouse News Service, October 27, 2021. https://www.courthousenews.com/shareholders-sue-facebook-following-whistleblower-revelations/.

Silberling, Amanda. TechCrunch, October 5, 2021. https://techcrunch.com/2021/10/05/facebook-whistleblower-frances-haugen-testifies-before-the-senate/.

Orlowski, Jeff, dir. The Social Dilemma. Produced by Larissa Rhodes. Netflix, January 26, 2020. https://www.netflix.com/watch/81254224.

Salazar, Heather. The Business Ethics Case Manual.
